Building with Open Source

In our new office!

🐙 Hi friend, how’s it going?

We just moved into a new office! 🎉

From our windows, you can actually see the reflection of the sea bouncing off nearby buildings. Not a full ocean view yet 😂 Maybe next year we’ll level up to the real thing.

I’ve also gotten really into making tibicos (aka water kefir). This means I’ve been spending many hours cutting fruit, filling jars and watching things ferment on my counter. Ah yes, I just alternate between coding and staring at the fermentation these days.

Our office currently looks like a science experiment. Amazing. 🍓🫧

🌻 Interesting Things (I think)

A range of things that I find interesting!

🖊️ Learning

🤖 AI

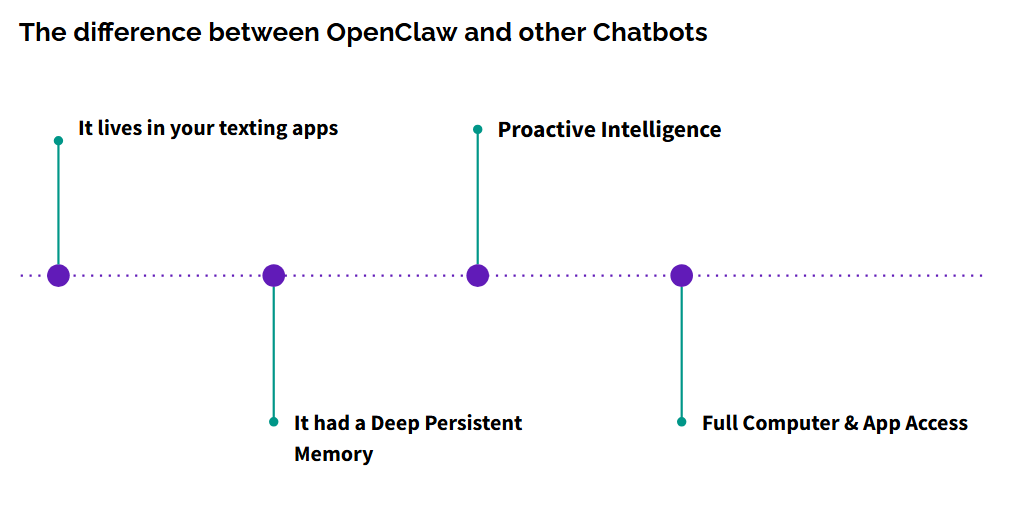

OpenClaw & the agentic shift

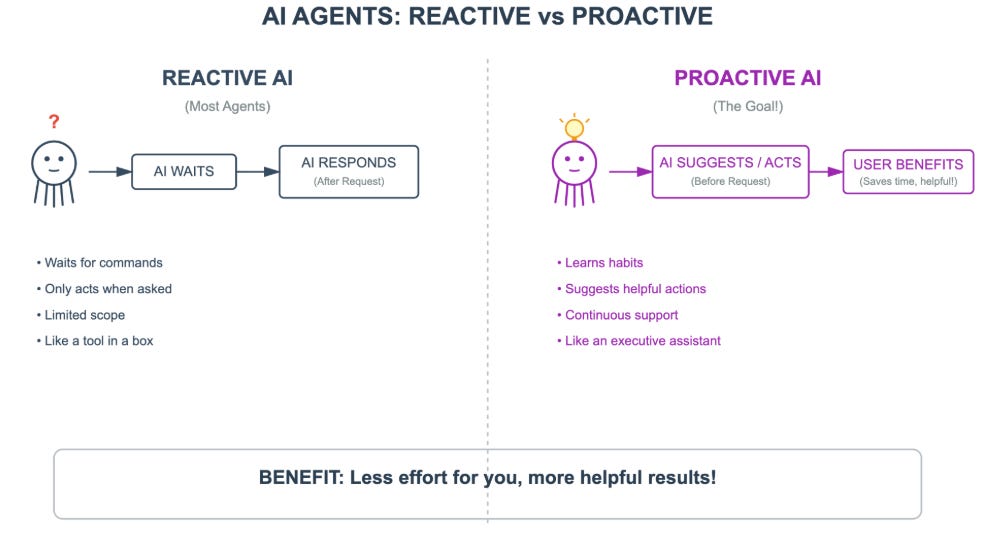

OpenClaw has been everywhere lately. While there’s definitely hype, I think it also signals something real: agentic systems are becoming much more accessible and affordable. It feels like a step toward AI that is proactive across tools, not just reacting to prompts.

That said, this also raises real questions around privacy, permissions and control. I’ve been playing around with it, but very intentionally and with caution. Curious to see how developers build on top of this next. It reminds me of when vibe coding first came out: very much not perfect, but the start of a real shift.

⚙️ Building

Automation agents for real life

I’ve been building small automation agents for my own life, starting with a finance document agent to help with bookkeeping and expense tracking. It’s not glamorous, but it’s genuinely painful work for me, which makes it the perfect thing to automate. I’m building with a simple rule: build for problems that annoy you daily. That’s usually where the highest leverage is!

🎭 Anime

Rakugo & storytelling

I’ve been watching the anime Rakugo recently, which is all about the art of storytelling. It talks about how stories evolve and how tradition adapts over time. Highly recommend if you like slower, reflective shows with a lot of heart.

🤝 Sponsored

Devs don’t just run one AI coding agent anymore! We’re running 3-4 at once and… hitting a wall. 🧱

That’s why Oz caught my attention. Oz is Warp’s new cloud coding agent that lets you run as many agents as you want, all orchestrated from one place. Instead of juggling local setups, Slack threads and schedulers, Oz gives you a single control panel to spin up isolated cloud environments, run agents across multiple repos and even schedule proactive work like doc updates or cleanup tasks.

What I really like is that Oz doesn’t just respond when you ping it: it can run in the background, trigger on events and notify you when something’s ready. And if an agent goes sideways, you can jump into the conversation, steer it or fork the work locally into a PR.

This feels like the natural next step for agentic dev workflows: not “one smart agent,” but orchestrating many agents at scale. If you’re already using agents locally and feeling the limits, Oz is worth exploring.

Try Warp Build today and get an extra 1000 Oz credits. ➞ https://oz.dev/tinanewsletter

🎥 This Week’s Video

🐙 Join the waitlist for Lonely Octopus if you’re interested in learning how to build AI agents!

P.S. All constructive feedback is greatly appreciated!

Do be careful with OpenClaw! As I've mentioned to you in emails, it is a massive security disaster waiting to happen (although there are security fixes to help minimize the problem).

Just yesterday I read there are 135,000 OpenClaw installations open to the Internet, because it listens on all network interfaces by default and most users don't know how to change it.
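Exposure like this typically comes down to the address a service binds to: `0.0.0.0` accepts connections on every network interface, while `127.0.0.1` accepts them only from the same machine. Here is a minimal Python sketch of that difference, using only the standard library. The helper names are hypothetical, and this is not OpenClaw's actual code or configuration.

```python
import ipaddress
import socket


def is_local_only(bind_host: str) -> bool:
    """Return True if a service bound to this address is reachable only from this machine."""
    return ipaddress.ip_address(bind_host).is_loopback


def open_listener(bind_host: str, port: int = 0) -> socket.socket:
    """Open a TCP listener on bind_host; port 0 lets the OS pick a free port."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    sock.bind((bind_host, port))
    sock.listen()
    return sock


if __name__ == "__main__":
    # Compare the loopback address with the "all interfaces" wildcard address.
    for host in ("127.0.0.1", "0.0.0.0"):
        label = "local only" if is_local_only(host) else "listening on every interface"
        print(f"{host}: {label}")
```

Whether a `0.0.0.0` listener is actually reachable from the Internet also depends on firewalls and routing, so treat this as a first check rather than a full audit.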

Worse, there are still tons of "AI influencers" on YouTube recommending this thing WITHOUT telling people how to set it up securely (only a few are).

I will not touch this thing until I have a dedicated, locked-down separate machine (or VPS) to work on it. I have a separate mini-PC but I'm dedicating that to running a cybersecurity lab.

Here's a recent discussion on the topic with Shoshana Cox, who has a Substack that you should read:

The AISec Intelligence Brief

substack.com/@disesdi

From OpenAI Ads to Rogue Agents: AI’s Trust Collapse

https://www.youtube.com/watch?v=khqApWKMaAk

From the description:

Are AI agents getting dangerous before they get useful?

From OpenAI ads to rogue agents and Moltbot-style exploits, this Singularity Chat digs into AI’s emerging trust crisis and what comes next.

In this episode of @overthehorizon, I am joined by Shoshana Cox, Brian Wang, and Kian Konrad Tajbaksh for a wide-ranging discussion on where today’s AI trajectory is really heading.

The conversation spans three critical fault lines now emerging in the AI ecosystem.

📌 First, the rise of AI agents and Moltbot-style behaviour. What looks like hype on the surface masks a deeper issue around prompt injection, agent autonomy, and systems that can act in the world without reliable safeguards.

📌 Second, the growing concern around ads, bias, and incentives inside AI systems. As ads and monetisation enter the assistant layer, what happens to trust, neutrality, and user intent? Can AI still function as a reliable interface to knowledge?

📌 And finally, Elon Musk’s idea of “emulated humans”. Agents that do not rely on APIs but learn to operate software the way humans do. Is this a safer path to automation, or the next major leap in AI capability?

This is not a debate about sentience or science fiction. It is a grounded discussion about power, control, incentives, and the systems we are building right now.

If AI is becoming the new operating layer for society, this episode asks a simple question:

Who do we trust, and why?

Watched your latest livestream - great that you covered the security issues with OpenClaw!

I sent you an email suggesting a topic for a future livestream: an interview with a cybersecurity expert like Jason Haddix or John Hammond, or an AI cybersecurity expert like Shoshana Cox, covering the intersection of agentic AI and cybersecurity. It might be very valuable for many people building this stuff.

We don't want them to be like the guy who created OpenClaw, who shipped AI-generated code that he didn't even read!